YU-FANG YANG

A Taiwanese cognitive neuroscientist based in Berlin, investigating how social signals shape neural activity, cognition, and behaviour.

EEG x Diffusion decision model

Project type

Research

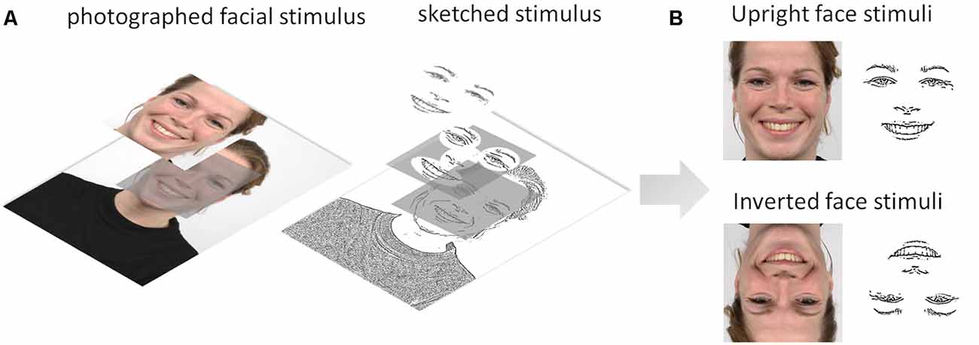

The human brain is tuned to recognize emotional expressions in faces shown in their natural upright orientation. The relative contributions of featural, configural, and holistic processing to decision-making remain poorly understood. This study used a diffusion decision model (DDM) to investigate how early face-sensitive processes contribute to emotion recognition from physiognomic features (the eyes, nose, and mouth). Specifically, we determined how experimental conditions tapping those processes affect early face-sensitive neuroelectric components (P100, N170, and P250) that reflect processes determining evidence accumulation at the behavioral level. We first examined the effects of stimulus orientation (upright vs. inverted) and stimulus type (photographs vs. sketches) on behavior and on the amplitude and latency of the neuroelectric components. We then explored the sources of variance common to the experimental effects on event-related potentials (ERPs) and on the DDM parameters. Two results suggest that the N170 indexes core visual processing for emotion recognition decision-making: (a) the additive effect of stimulus inversion and impoverishment on N170 latency; and (b) a multivariate analysis suggesting that N170 neuroelectric activity must increase to counteract the detrimental effects of face inversion on drift rate and of stimulus impoverishment on the stimulus encoding component of non-decision time. Overall, our results show that emotion recognition remains possible with degraded stimuli, but at a neurocognitive cost that reflects how hard the brain must work to accumulate sensory evidence for a given emotion.
Accordingly, we theorize that: (a) the P100 neural generator would provide a holistic frame of reference to the face percept through categorical encoding; (b) the N170 neural generator would maintain the structural cohesiveness of the subtle configural variations in facial expressions across our experimental manipulations through coordinate encoding of the facial features; and (c) building on the previous configural processing, the neurons generating the P250 would be responsible for a normalization process adapting to the facial features to match the stimulus to internal representations of emotional expressions.
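
For readers unfamiliar with the DDM parameters mentioned above, the following is a minimal illustrative sketch, not the analysis pipeline used in the study. It simulates a basic two-boundary diffusion process to show how a lower drift rate reduces accuracy and how a longer non-decision time shifts response times. The function name `simulate_ddm`, the condition labels, and all parameter values are hypothetical choices made for illustration only.

```python
import numpy as np


def simulate_ddm(drift, boundary=1.0, non_decision=0.3, noise=1.0,
                 dt=0.002, max_time=3.0, n_trials=1000, seed=0):
    """Simulate an unbiased diffusion decision process.

    Evidence starts at 0 and accumulates until it reaches +boundary/2
    (treated here as the correct response) or -boundary/2 (error).
    Returns response times in seconds and a boolean accuracy array,
    restricted to trials that reached a boundary within max_time.
    """
    rng = np.random.default_rng(seed)
    n_steps = int(max_time / dt)

    # Each step adds a deterministic drift term plus Gaussian noise.
    steps = drift * dt + noise * np.sqrt(dt) * rng.standard_normal((n_trials, n_steps))
    paths = np.cumsum(steps, axis=1)

    # Find the first time step at which either boundary is crossed.
    crossed = np.abs(paths) >= boundary / 2
    finished = crossed.any(axis=1)
    first = crossed.argmax(axis=1)

    decision_time = (first + 1) * dt
    correct = paths[np.arange(n_trials), first] > 0

    # Observed RT = decision time + non-decision time (encoding and motor).
    rt = decision_time + non_decision
    return rt[finished], correct[finished]


if __name__ == "__main__":
    # Hypothetical parameter values; they are not estimates from the study.
    conditions = {
        "high drift, short non-decision time": dict(drift=2.0, non_decision=0.3),
        "low drift, short non-decision time": dict(drift=0.5, non_decision=0.3),
        "high drift, long non-decision time": dict(drift=2.0, non_decision=0.4),
    }
    for label, params in conditions.items():
        rt, correct = simulate_ddm(**params)
        print(f"{label}: accuracy={correct.mean():.2f}, mean RT={rt.mean():.3f} s")
```

In this sketch, lowering the drift rate mainly costs accuracy, whereas lengthening the non-decision time adds a constant delay to every response without changing which boundary is reached. This is the sense in which the two parameters can dissociate effects on evidence accumulation from effects on stimulus encoding.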